Bandwidth of the Brain: A Rigorous Look at the Numbers Behind Neural Interfaces in 2025

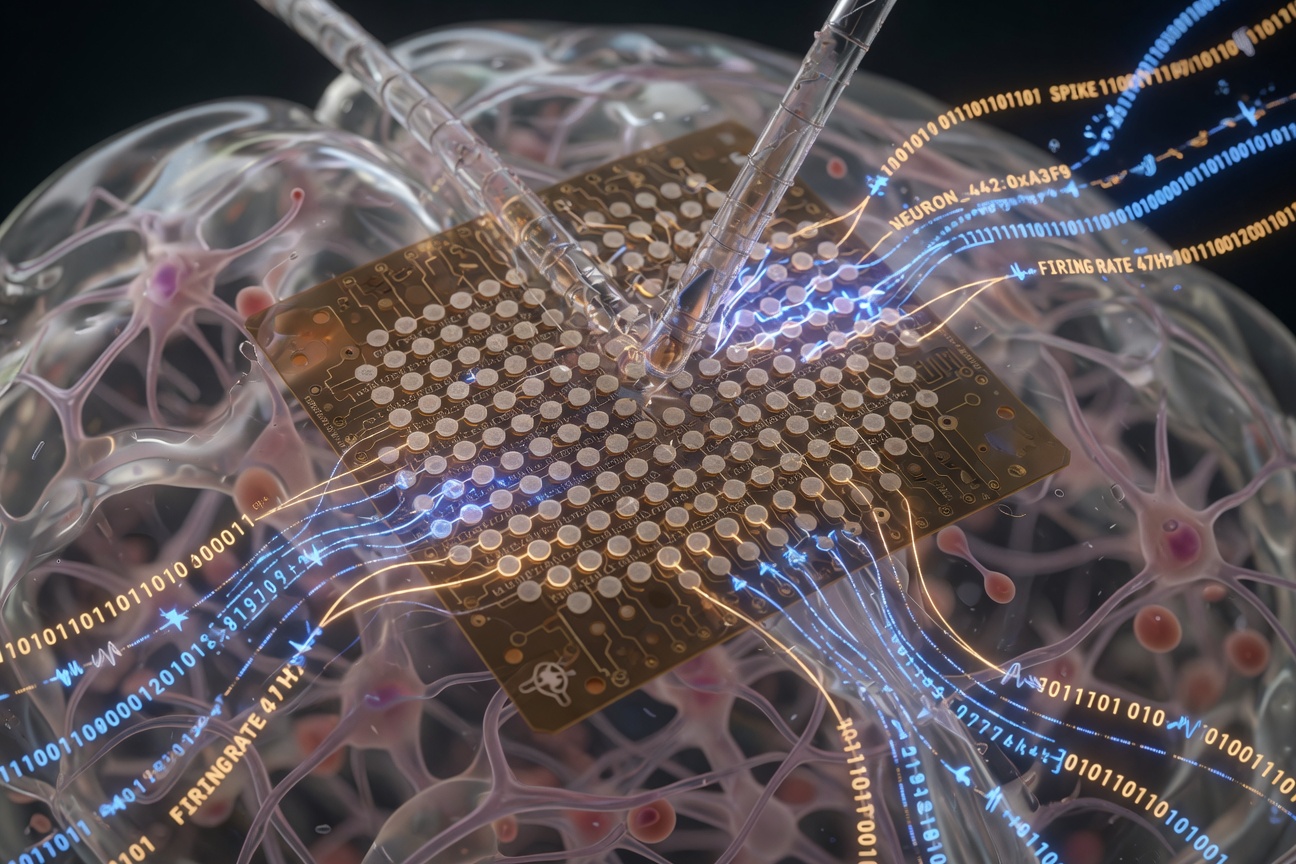

Think of the human brain as a data center running approximately 86 billion processing nodes, each firing at rates between 0.1 and 100 Hz, generating a total estimated internal bandwidth somewhere between 1 terabit and 1 petabit per second depending on how you count synaptic events. Now consider that the best commercially tested brain-computer interface available today captures, at peak performance, signals from roughly 1,024 electrode channels simultaneously, each sampling at most a few nearby neurons. That ratio is not a gap. It is a chasm. And the engineers and neuroscientists scrambling to close it are generating some of the most compelling experimental data in the history of applied neuroscience.

This is not a story about hope or hype. It is a story about benchmarks, and what they actually tell us about where the field stands heading into the second half of the 2020s.

The Channel Count Problem: More Electrodes, More Questions

The foundational metric of any BCI system is electrode count, expressed as the number of independent neural recording channels. For context: the Utah Array, a standard in academic research since the 1990s, offers 96 channels. Neuralink's N1 implant, which received its first human implantation in January 2024 under an FDA investigational device exemption, deploys 1,024 electrodes distributed across 64 flexible threads, each thinner than a human hair. That represents a roughly 10x increase in channel count over the previous gold standard, and independent analysis of the company's published data confirms usable signal acquisition from approximately 85 percent of those channels under optimal conditions.
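The arithmetic behind those two figures is easy to check. The sketch below uses only the numbers quoted above; the function names are illustrative, and the 85 percent yield is the article's reported figure, not an independently measured one.

```python
# Figures quoted in the text; nothing here is measured data.
UTAH_CHANNELS = 96        # Utah Array channel count
N1_CHANNELS = 1024        # Neuralink N1 electrode count
USABLE_FRACTION = 0.85    # reported usable-channel yield under optimal conditions


def channel_ratio(new: int, baseline: int) -> float:
    """Return how many times more channels `new` has than `baseline`."""
    return new / baseline


def usable_channels(total: int, fraction: float) -> int:
    """Return the number of channels expected to carry usable signal."""
    return round(total * fraction)


ratio = channel_ratio(N1_CHANNELS, UTAH_CHANNELS)
usable = usable_channels(N1_CHANNELS, USABLE_FRACTION)

print(f"Channel ratio: {ratio:.1f}x")
print(f"Usable channels at {USABLE_FRACTION:.0%} yield: {usable}")
```

On these inputs the ratio comes out slightly above 10x, and the usable-channel estimate lands near 870, which is the number that matters more than the headline 1,024.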

But channel count alone is a misleading headline metric. Signal-to-noise ratio (SNR), impedance stability over time, and spatial resolution all contribute to what researchers call "effective information throughput," a composite benchmark that captures not just how many neurons you can monitor but how cleanly and persistently you can read them. On this more demanding measure, the numbers get harder to celebrate. Chronic implant studies across multiple research groups have documented impedance drift of 30 to 60 percent over 12-month periods, which progressively degrades signal quality. Neuralink's own May 2024 update on its first human participant acknowledged retraction of implanted threads from target cortical regions as a contributing factor to initial performance degradation, though the company reported that subsequent software compensation strategies partially recovered lost cursor-control throughput.

"Electrode count is the horsepower figure on a car brochure. What matters for the driver is whether the transmission actually delivers that power to the wheels."

Information Transfer Rate: The Metric That Cuts Through the Marketing

Researchers have increasingly converged on Information Transfer Rate (ITR), measured in bits per minute, as the most honest cross-platform benchmark for BCI performance. ITR accounts simultaneously for speed, accuracy, and the number of possible outputs a system can distinguish, producing a single number that allows comparison across radically different hardware architectures.
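One widely used way to fold speed, accuracy, and output count into a single figure is the Wolpaw ITR formula. The sketch below assumes that definition; the article does not say which ITR variant each cited study used, and the function names and example parameters are hypothetical.

```python
import math


def wolpaw_bits_per_selection(n_targets: int, accuracy: float) -> float:
    """Bits conveyed by one selection under the Wolpaw definition.

    Assumes all targets are equally likely and that errors are spread
    evenly across the wrong targets.
    """
    if n_targets < 2:
        raise ValueError("n_targets must be at least 2")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")

    bits = math.log2(n_targets)
    # Guard the two terms separately so log2(0) is never evaluated.
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits


def itr_bits_per_minute(n_targets: int, accuracy: float,
                        selections_per_minute: float) -> float:
    """Information transfer rate: bits per selection times selection rate."""
    return wolpaw_bits_per_selection(n_targets, accuracy) * selections_per_minute


# Hypothetical speller: 26 targets, 90 percent accuracy, 20 selections per minute.
example_itr = itr_bits_per_minute(n_targets=26, accuracy=0.90,
                                  selections_per_minute=20)
print(f"Example ITR: {example_itr:.1f} bits/min")
```

Under this definition a perfect four-target system yields exactly 2 bits per selection, and a system performing at chance yields zero, which is why a fast but inaccurate decoder can score worse than a slow, reliable one.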

The published ITR landscape in 2025 is revealing. State-of-the-art non-invasive EEG-based systems, which use electrode caps placed on the scalp and require no surgery, top out at approximately 50 to 70 bits per minute in controlled laboratory conditions, with real-world performance typically falling to 20 to 40 bits per minute due to motion artifacts and environmental interference. High-density ECoG systems, which involve placing electrode grids directly on the brain surface without penetrating tissue, have demonstrated ITRs in the 200 to 300 bits-per-minute range in research settings, though these require craniotomy and are used almost exclusively in epilepsy monitoring contexts. Fully implanted penetrating electrode arrays, including the Neuralink N1 platform, have shown peak ITRs above 400 bits per minute in Neuralink's published demonstrations of cursor control and text composition tasks. For comparison, an experienced typist on a physical keyboard generates information at a rate roughly equivalent to 60 to 80 bits per minute.

That last comparison carries an important implication: at peak performance, the current generation of invasive BCIs is already faster than conventional human-computer interaction for specific, well-defined motor output tasks. The question is no longer whether neural interfaces can theoretically outperform fingers on a keyboard. The question is whether they can do it reliably, comfortably, and safely enough across a broad population to justify the neurosurgical risk profile.

Decoding Accuracy Under Real-World Conditions

Laboratory benchmarks have a notorious tendency to collapse when patients take devices home. A 2024 multi-center trial examining BCI-assisted communication in ALS patients with implanted electrode arrays reported median at-home decoding accuracy of 78.3 percent for phoneme classification, compared to 91.2 percent under controlled lab conditions. That 13-percentage-point gap may not sound catastrophic, but for someone composing a message one phoneme at a time, an error rate of roughly one in five interactions produces a functionally exhausting experience that erodes adoption even when the technology is nominally working.
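To see why the gap matters more than it looks, consider how per-phoneme errors compound over a message. The sketch below assumes errors are independent from one phoneme to the next, which is a simplification, and the 20-phoneme message length is a hypothetical example rather than a figure from the trial.

```python
def p_error_free(accuracy: float, n_symbols: int) -> float:
    """Probability that every symbol in a message is decoded correctly,
    assuming independent errors."""
    return accuracy ** n_symbols


def expected_errors(accuracy: float, n_symbols: int) -> float:
    """Expected number of misclassified symbols in a message."""
    return (1.0 - accuracy) * n_symbols


LAB_ACCURACY = 0.912    # reported lab phoneme accuracy
HOME_ACCURACY = 0.783   # reported median at-home phoneme accuracy
MESSAGE_LENGTH = 20     # hypothetical message length in phonemes

for label, acc in (("lab", LAB_ACCURACY), ("home", HOME_ACCURACY)):
    print(f"{label}: expected errors = {expected_errors(acc, MESSAGE_LENGTH):.2f}, "
          f"P(no errors) = {p_error_free(acc, MESSAGE_LENGTH):.3f}")
```

Under these assumptions the at-home user should expect more than four corrections in a 20-phoneme message, against fewer than two in the lab, and the chance of getting the whole message right on the first pass drops sharply.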

Neuralink's publicly reported data for its first participant, Noland Arbaugh, showed cursor-control accuracy sufficient for unassisted computer use, chess, and gaming across months of recorded sessions. The company has not yet published peer-reviewed ITR or decoding accuracy statistics for its full participant cohort, which expanded to multiple implanted individuals by mid-2025. Academic reviewers have noted this absence, pointing out that individual variation in neural signal quality, cortical anatomy, and motor intention encoding means single-subject demonstrations, however impressive, carry limited statistical generalizability.

The methodological discipline required to evaluate these systems rigorously is itself evolving. BrainGate consortium researchers published a standardized BCI evaluation protocol in late 2024 that prescribes minimum trial counts, session conditions, and reporting requirements for ITR and accuracy claims. Adoption of this framework across industry players would represent a meaningful step toward the kind of reproducible benchmarking that allows confident comparison. Whether commercial entities with competitive reasons to control their data narrative will voluntarily conform to third-party evaluation standards remains an open empirical question.

The Non-Invasive Horizon: Catching Up Faster Than Expected

It would be a mistake to read the implant performance data as rendering non-invasive approaches obsolete. Two converging developments are reshaping the competitive landscape in ways that the raw electrode-count framing obscures. First, advances in AI-driven signal processing, particularly transformer-based neural decoders trained on large cross-subject datasets, have dramatically improved the usable signal extraction from low-density EEG systems. Published results from multiple groups in 2024 and early 2025 show AI-augmented EEG systems achieving ITRs 40 to 60 percent higher than the same hardware running earlier decoder architectures, without any change to the physical recording apparatus. The hardware did not improve. The interpretation did.

Second, a class of minimally invasive approaches occupying the middle ground between scalp EEG and penetrating implants is generating serious research momentum. Synchron's Stentrode, which is delivered endovascularly through blood vessels and sits within the superior sagittal sinus adjacent to motor cortex, has now accumulated multi-year follow-up data in ALS patients showing stable performance without the thread retraction issues that have affected some penetrating electrode designs. The tradeoff is lower spatial resolution and channel count compared to penetrating arrays, but the risk profile is substantially more favorable. Peer-reviewed data published in 2024 reported Stentrode patients achieving 22 to 44 selections per minute in communication tasks, a modest but meaningful real-world throughput figure maintained consistently across home-use conditions.

What the Numbers Demand Next

Synthesizing the available benchmark data produces a picture that is simultaneously more encouraging and more complicated than either the booster or the skeptic camp tends to admit. Implanted high-density systems genuinely do deliver ITR and decoding capability that exceeds alternative input modalities for specific user populations. That is an empirically supported statement. But chronic stability, multi-user generalizability, and real-world versus lab performance gaps represent genuine and unresolved engineering challenges that current marketing materials rarely quantify honestly.

The field needs several things that only rigorous, standardized, independently verified experimental data can provide. It needs multi-year implant stability curves across diverse patient populations. It needs head-to-head ITR comparisons conducted under identical conditions across competing platforms. It needs statistical power calculations that justify confidence in decoding accuracy claims rather than cherry-picked demonstration sessions.
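As an illustration of why trial counts matter for accuracy claims, the sketch below computes a Wilson score confidence interval for a reported decoding accuracy. The choice of the Wilson interval is an assumption made here for illustration, not a method the article or any cited protocol prescribes, and the trial counts are hypothetical.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion.

    z = 1.96 corresponds to roughly 95 percent confidence.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")

    p_hat = successes / trials
    denom = 1.0 + z * z / trials
    center = (p_hat + z * z / (2.0 * trials)) / denom
    half_width = z * math.sqrt(
        p_hat * (1.0 - p_hat) / trials + z * z / (4.0 * trials * trials)
    ) / denom
    return center - half_width, center + half_width


# Hypothetical sessions with the same 90 percent observed accuracy.
for correct, total in ((45, 50), (900, 1000)):
    low, high = wilson_interval(correct, total)
    print(f"{correct}/{total}: 95% interval = [{low:.3f}, {high:.3f}]")
```

The same headline accuracy supports a much tighter claim at 1,000 trials than at 50, which is the practical argument for reporting trial counts alongside any accuracy figure.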

Neuralink, Synchron, Precision Neuroscience, and the academic BrainGate consortium are all accumulating exactly this kind of data. Some of it is beginning to enter peer review. More will follow as trial cohorts grow and follow-up periods lengthen. The honest assessment is that the race to close the gap between the brain's estimated terabit-to-petabit internal bandwidth and what silicon can capture is genuinely accelerating, driven by algorithmic gains as much as hardware innovation. Whether the destination is a narrow medical device or a broadly deployed human augmentation tool depends less on the boldness of any entrepreneur's vision than on what the next several years of rigorous experimental data actually show. Those numbers, not the press releases, will write the real story.